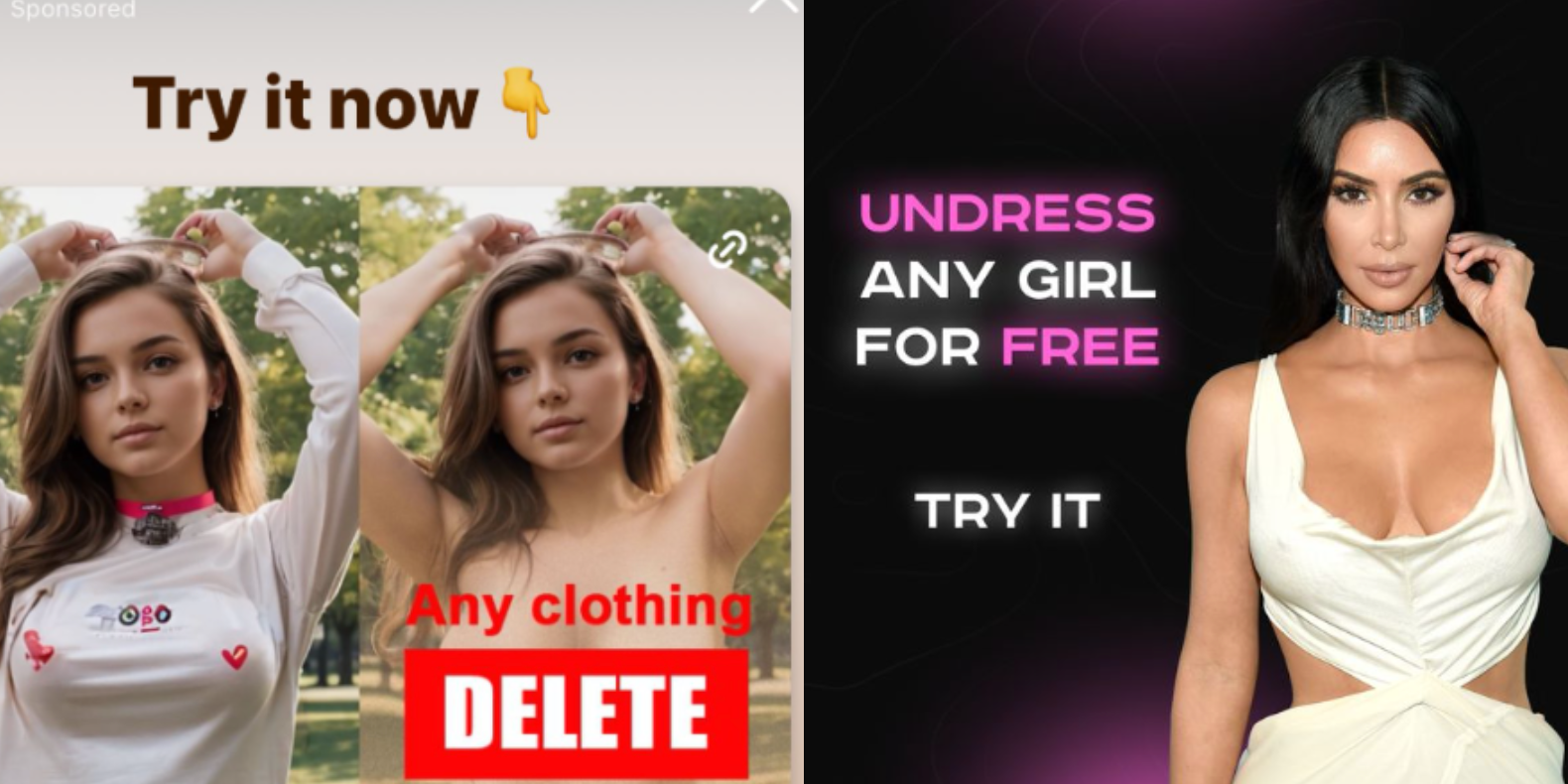

Instagram is profiting from several ads that invite people to create nonconsensual nude images with AI image generation apps, once again showing that some of the most harmful applications of AI tools are not hidden in the dark corners of the internet, but are actively promoted to users by social media companies unable or unwilling to enforce their policies about who can buy ads on their platforms.

While parent company Meta’s Ad Library, which archives ads on its platforms, who paid for them, and where and when they were posted, shows that the company has taken down several of these ads previously, many ads that explicitly invited users to create nudes, along with some of the ad buyers, were still active until I reached out to Meta for comment. Some of these ads were for the best known nonconsensual “undress” or “nudify” services on the internet.

Good, let all the celebs come together and sue Zuck into the ground.

It’s funny how many people leapt to the defense of Title V of the Telecommunications Act of 1996 Section 230 liability protection, as this helps shield social media firms from assuming liability for shit like this.

Sort of the Heads-I-Win / Tails-You-Lose nature of modern business-friendly legislation and courts.

Is there such a thing as a consensual undressing app? Seems redundant.

I assume that’s what you’d call OnlyFans.

That said, the irony of these apps is that it’s not the nudity that’s the problem, strictly speaking. It’s taking someone’s likeness and plastering it on a digital mannequin. What social media has enabled is the online equivalent of going through a girl’s trash to find an old comb, pulling the hair off, and putting it on a Barbie doll that you then use to jerk/jill off.

What was the domain of 1980s perverts from comedies about awkward high schoolers has now become a commodity we’re supposed to treat as normal.

Idk how many people are viewing this as normal, I think most of us recognize all of this as being incredibly weird and creepy.

> Idk how many people are viewing this as normal

Maybe not “Lemmy” us. But the folks who went hog wild during The Fappening, combined with younger people who are coming into contact with pornography for the first time, make a ripe base of users who will consider this the new normal.

Yet another example of multibillion-dollar companies that don’t curate their content because it’s too hard and expensive. Well, too bad, maybe you only profit 46 billion instead of 55 billion. Boo hoo.

It’s not that it’s too expensive, it’s that they don’t care. They won’t do the right thing until and unless they are forced to, or it affects their bottom line.

An economic entity cannot care; I don’t understand how people expect them to. They are not human.

Economic entities aren’t robots, they’re collections of people engaged in the act of production, marketing, and distribution. If this ad/product exists, it’s because people made it exist deliberately.

No, they are slaves to the entity.

They can be replaced

Everyone from top to bottom can be replaced

And will be unless they obey the machine’s will

It’s crazy talk to deny this fact because it feels wrong

It’s just the truth and yeah, it’s wrong

> Everyone from top to bottom can be replaced

Once you enter the actual business sector and find out how much information is siloed or sequestered in the hands of a few power users, I think you’re going to be disappointed to discover this has never been true.

More than one business has failed because a key member of the team left, got an ill-conceived promotion, or died.

> Well too bad maybe you only profit 46 billion instead of 55 billion.

I can’t possibly imagine this quality of clickbait is bringing in $9B annually.

Maybe I’m wrong. But this feels like the sort of thing a business does when it’s trying to juice the same lemon for the fourth or fifth time.